Last fall, we published a four-part series on the challenges of quality control in high-mix, low-volume manufacturing — the setup overhead, the inconsistency of manual checks, and the documentation burden that comes with compliance. We outlined a direction: mobile, software-first, adaptable at production speed.

Six months later, that direction has a name: Inventor, which is now a production system. This article is a high-level overview of what it does and why it works. Future articles will cover specific layers in detail: one-shot labeling, synthetic failure generation, and the model cascade.

The thesis: compress the loop

The bottleneck in deploying computer vision on the factory floor was never the inference itself. Modern models are fast and accurate enough for most industrial inspection tasks. The bottleneck was everything around it: collecting training data, labeling it, training models, configuring the inspection logic, deploying to the floor, and then doing it all again when the next product variant arrives.

Inventor compresses that entire loop into a single, repeatable workflow that runs on hardware your teams already carry.

Why mobile

The decision to build on iPhone and iPad was deliberate, not a compromise. These devices check every box for edge deployment in manufacturing.

They’re already present. Many factories have Apple Business Manager programs in place. Others have iPhones in every pocket. Either way, the device is not a procurement event.

They’re powerful enough. Current iPhones run Core ML inference on the Apple Neural Engine, hardware designed for exactly this class of workload. Detection, classification, and segmentation models run on-device at production speed with no cloud dependency.

They capture good data. The cameras are high-resolution, well-calibrated, and consistent enough to build training datasets from — no lab setup required. A quality engineer walks the line, records a few minutes of video per station, and the system has what it needs.

They increase reach. A phone goes inside an aircraft fuselage, around a curved composite panel, along a packing line. Inspection follows the work instead of the work coming to the inspection station.

The deployment infrastructure is mature. App distribution, updates, device management — Apple solved these problems years ago. We inherit all of it.

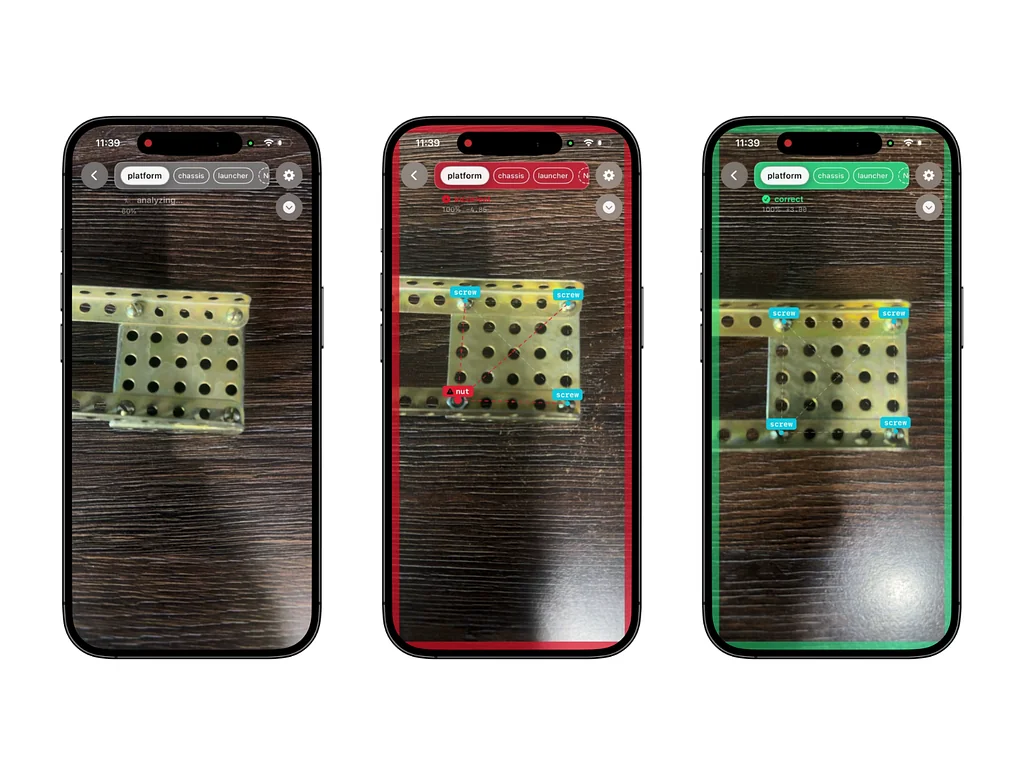

Inventor in action: inconclusive, incorrect, and correct configurations

What the pipeline does

The end-to-end workflow has five stages, each designed to remove a traditional bottleneck.

Data capture happens on the shop floor with the same app that later runs inspection. No separate tooling, no lab conditions.

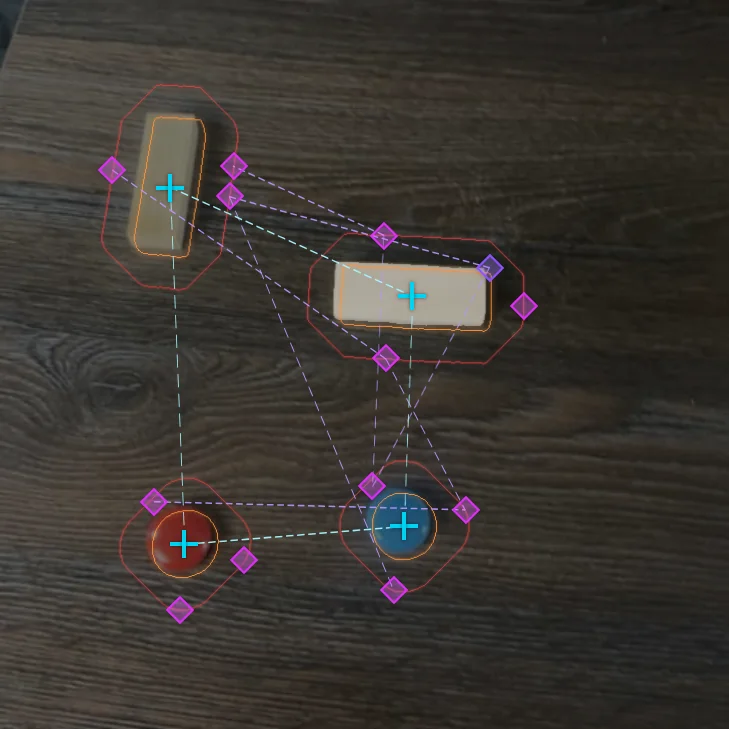

One-shot labeling eliminates the annotation bottleneck. An engineer labels a single reference frame; the system propagates annotations across the full dataset automatically.

Automated labeling in action

Synthetic failure generation solves the data problem for defects you rarely see. From a learned baseline of correct assemblies, the system generates hundreds of plausible failure configurations — missing parts, swapped components, misalignments — without physically staging each one.

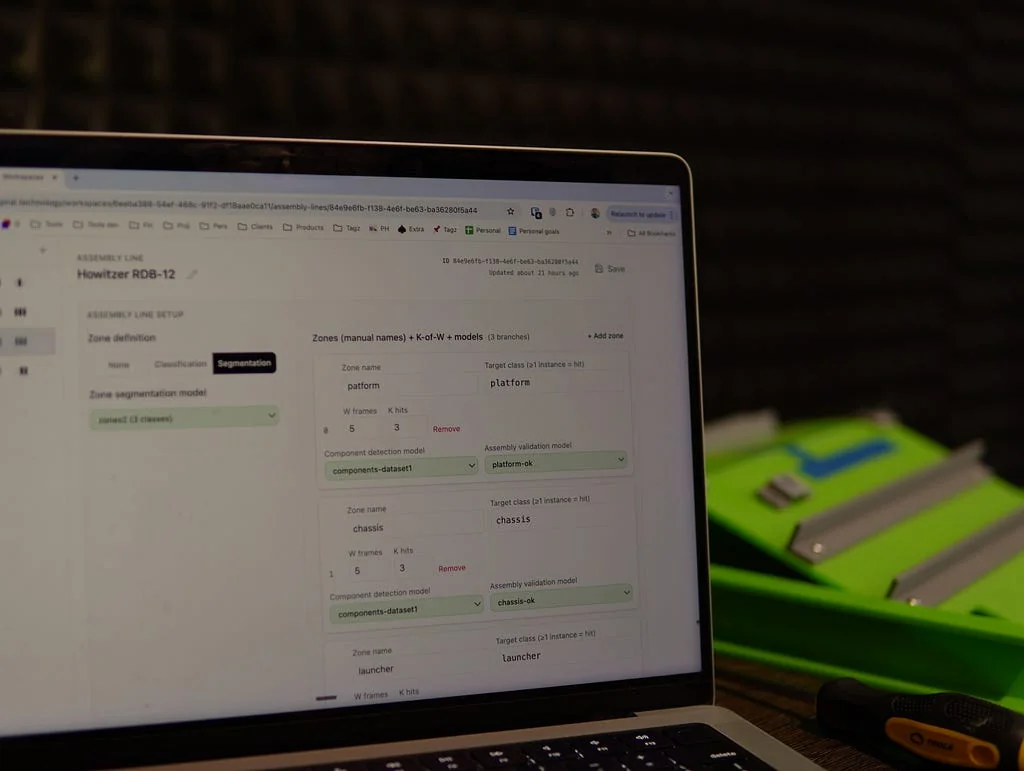
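At the level of configurations rather than images, the idea of deriving failures from a correct baseline can be sketched as mutating a known-good layout. This is only an illustration of the three failure types named above, not a description of how Inventor generates its data; the `Placement` type, the slot and part names, and the 15 mm offset are all hypothetical.

```python
from dataclasses import dataclass, replace
from itertools import combinations


@dataclass(frozen=True)
class Placement:
    slot: str    # hypothetical slot name on the assembly
    part: str    # part expected in that slot
    x_mm: float
    y_mm: float


def missing_variants(baseline):
    """One variant per slot, with that slot's part removed."""
    return [[p for p in baseline if p.slot != gone.slot] for gone in baseline]


def swapped_variants(baseline):
    """One variant per pair of slots holding different parts, parts exchanged."""
    variants = []
    for a, b in combinations(baseline, 2):
        if a.part == b.part:
            continue  # swapping identical parts is not a visible failure
        swap = {a.slot: b.part, b.slot: a.part}
        variants.append(
            [replace(p, part=swap.get(p.slot, p.part)) for p in baseline]
        )
    return variants


def misaligned_variants(baseline, offset_mm=15.0):
    """One variant per slot, with that slot shifted along x by offset_mm."""
    variants = []
    for moved in baseline:
        variants.append(
            [
                replace(p, x_mm=p.x_mm + offset_mm) if p.slot == moved.slot else p
                for p in baseline
            ]
        )
    return variants


# Hypothetical correct assembly: one bracket and two identical clips.
baseline = [
    Placement("A", "bracket", 0.0, 0.0),
    Placement("B", "clip", 40.0, 0.0),
    Placement("C", "clip", 80.0, 0.0),
]
failures = (
    missing_variants(baseline)
    + swapped_variants(baseline)
    + misaligned_variants(baseline)
)
```

Even this toy baseline of three parts yields several distinct failure layouts, which hints at why enumerating them in software scales better than staging each one by hand.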

No-code configuration lets a quality lead compose an inspection flow through a visual interface: zones, models per zone, acceptance thresholds. When builds change, reconfiguration takes minutes.

On-device inspection gives the operator a pass/fail verdict in under two seconds, with specific failure reasons tied to the acceptance criteria. Every event is logged with full traceability.
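One way to picture the last two stages is as plain data (zones, a model per zone, an acceptance threshold) plus a small evaluation step that turns detections into a verdict with reasons. The sketch below assumes that shape; `ZoneRule`, `evaluate`, the zone and model names, and the thresholds are hypothetical and do not reflect Inventor's actual configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ZoneRule:
    zone: str              # region of the assembly being checked
    model: str             # hypothetical identifier of the model assigned to the zone
    expected: str          # label the zone must show to be accepted
    min_confidence: float  # acceptance threshold for that label


def evaluate(rules, detections):
    """Return (verdict, reasons); detections maps zone -> (label, confidence)."""
    reasons = []
    for rule in rules:
        if rule.zone not in detections:
            reasons.append(f"{rule.zone}: no detection")
            continue
        label, confidence = detections[rule.zone]
        if label != rule.expected:
            reasons.append(f"{rule.zone}: expected {rule.expected}, found {label}")
        elif confidence < rule.min_confidence:
            reasons.append(
                f"{rule.zone}: confidence {confidence:.2f} "
                f"below {rule.min_confidence:.2f}"
            )
    return ("pass" if not reasons else "fail", reasons)


# Hypothetical two-zone inspection flow.
rules = [
    ZoneRule("left_rail", "connector_detector", "connector_a", 0.80),
    ZoneRule("door_panel", "label_reader", "warning_label", 0.90),
]
verdict, reasons = evaluate(
    rules,
    {
        "left_rail": ("connector_b", 0.95),
        "door_panel": ("warning_label", 0.97),
    },
)
```

Because each reason names the zone and the criterion that failed, the same structure could serve both the operator's screen and the traceability log.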

The result is a system where a new part family goes from first requirements session to live inspection in roughly a week. Adding a new variant to an existing configuration takes about an hour.

Inventor configuration portal

The trade-offs — honestly

This is not a replacement for high-precision stationary machine vision. A handheld phone does not deliver sub-millimeter accuracy: it has no macro lenses, no telecentric optics, and no controlled lighting rig. Positional tolerance is approximately ±10 mm, and features smaller than about 5 mm cannot be detected reliably.

For many industrial inspection tasks — assembly verification, component presence, orientation checking, marking validation, FOD detection — that is more than sufficient. For tasks that require micrometer precision or magnification, it is not the right tool, and we say so.
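The limits quoted above lend themselves to a quick screening check before a task is considered for handheld inspection. The two constants come from the figures in this article; the function name and the wording of the messages are illustrative assumptions, and a real assessment would weigh more than these two numbers.

```python
POSITION_TOLERANCE_MM = 10.0  # approximate positional tolerance quoted above
MIN_FEATURE_MM = 5.0          # features below about this size are unreliable


def screen_task(smallest_feature_mm, required_position_tolerance_mm):
    """Return reasons the task is a poor fit; an empty list passes the screen."""
    problems = []
    if smallest_feature_mm < MIN_FEATURE_MM:
        problems.append(
            f"smallest feature {smallest_feature_mm} mm is below {MIN_FEATURE_MM} mm"
        )
    if required_position_tolerance_mm < POSITION_TOLERANCE_MM:
        problems.append(
            f"required tolerance {required_position_tolerance_mm} mm is tighter "
            f"than {POSITION_TOLERANCE_MM} mm"
        )
    return problems


# Component-presence check on a bracket: passes the screen.
presence_check = screen_task(smallest_feature_mm=12.0, required_position_tolerance_mm=15.0)

# Solder-joint measurement: fails on both counts.
solder_check = screen_task(smallest_feature_mm=0.5, required_position_tolerance_mm=0.1)
```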

The trade-off is explicit: we exchange peak precision for mobility, speed of deployment, and accessibility. In high-mix manufacturing, where the alternative is often a paper checklist and a flashlight, that exchange is overwhelmingly favorable.

Under the hood: a cascade, not a single model

Inventor doesn’t rely on one monolithic model. It runs a cascade of purpose-trained models in sequence: zone segmentation identifies which part of the assembly is in view, component detection locates and classifies individual parts within each zone, and configuration validation checks the detected layout against the defined acceptance criteria. Surface anomaly detection, marking recognition, and final verdict generation each have their own stage.

This layered approach means each model stays narrow and accurate at its task, and the system can be updated at any layer without retraining the rest. We’ll go deeper on the architecture in a future article.
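The structural point, that stages run in sequence and any one can be replaced without touching the others, can be shown with a toy pipeline. Every stage below is a stub returning fixed values rather than a real model, the surface anomaly and marking stages are omitted for brevity, and all names are hypothetical; this is a sketch of the pattern, not of Inventor's architecture.

```python
def zone_segmentation(ctx):
    # Stub: a real stage would infer the zone from the camera frame.
    return {**ctx, "zone": "bracket_row"}


def component_detection(ctx):
    # Stub: a real stage would run a detector restricted to the zone.
    return {**ctx, "components": ["bracket", "clip"]}


def configuration_validation(ctx):
    # Compare detected components with what the zone is expected to contain.
    expected = ctx["expected"][ctx["zone"]]
    missing = [c for c in expected if c not in ctx["components"]]
    return {**ctx, "missing": missing}


def verdict_generation(ctx):
    return {**ctx, "verdict": "pass" if not ctx["missing"] else "fail"}


def run_cascade(stages, ctx):
    """Feed the context through each stage in order."""
    for stage in stages:
        ctx = stage(ctx)
    return ctx


stages = [
    zone_segmentation,
    component_detection,
    configuration_validation,
    verdict_generation,
]
request = {"frame": None, "expected": {"bracket_row": ["bracket", "clip", "washer"]}}
result = run_cascade(stages, request)


def improved_component_detection(ctx):
    # Stand-in for an updated detector that also finds the washer.
    return {**ctx, "components": ["bracket", "clip", "washer"]}


# Swap one layer; the other stages are reused unchanged.
updated_stages = [
    improved_component_detection if s is component_detection else s
    for s in stages
]
updated_result = run_cascade(updated_stages, request)
```

With the original stub the washer is reported missing and the verdict is a fail; swapping only the detection stage changes the outcome while segmentation, validation, and verdict logic stay as they were.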

What comes next

This is the overview. In the coming weeks, we’ll publish focused pieces on individual layers of the pipeline — how one-shot labeling actually works, how synthetic failures are generated, and what the model cascade looks like in practice. If you followed the series last fall, consider this the bridge between the problem statement and the technical deep-dives.